When asked to name a linguistically diverse place, I would have said Papua New Guinea, and if asked to name a stereotypically monolingual country, I would have named the USA. However, this recent report from the New York Times suggests that, thanks to its large immigrant population, New York harbours more endangered languages than anywhere else on Earth (tip-off from Edinburgh University’s Lang Soc Blog). From a field linguist’s point of view, this may make finding and accessing minority languages much easier (although it may also mean the end of exotic holidays). From a cultural evolution point of view, a more global community may mean a radically different kind of competition between languages. Nice video below:

Linguistic diversity and traffic accidents

I was thinking about Daniel Nettle’s model of linguistic diversity, which showed that linguistic variation tends to decline even with a small amount of migration between communities. I wondered if statistics about population movement would correlate with linguistic diversity, as measured by the Greenberg Diversity Index (GDI) for a country (see below). However, this is a cautionary tale about obsession and the use of statistics. (See bottom of post for link to data).
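For readers who have not met the Greenberg Diversity Index: it is commonly calculated as one minus the sum of the squared population shares of each language, which gives the probability that two randomly chosen inhabitants do not share a first language. The sketch below assumes the input is simply a table of speaker counts per language; the function name and the example populations are hypothetical and are not the data behind this post.

```python
from typing import Mapping


def greenberg_diversity_index(speakers: Mapping[str, float]) -> float:
    """Return 1 - sum(p_i ** 2), where p_i is the share of the population
    whose first language is language i.

    Equivalently: the probability that two people drawn at random (with
    replacement) from the population have different first languages.
    """
    total = sum(speakers.values())
    if total <= 0:
        raise ValueError("speaker counts must sum to a positive number")
    return 1.0 - sum((count / total) ** 2 for count in speakers.values())


# Hypothetical populations (made-up numbers, for illustration only)
monolingual = {"Language A": 1_000_000}
two_way_split = {"Language A": 500_000, "Language B": 500_000}
lopsided = {"Language A": 900_000, "Language B": 100_000}

for name, pop in [("monolingual", monolingual),
                  ("two-way split", two_way_split),
                  ("lopsided", lopsided)]:
    print(f"{name}: GDI = {greenberg_diversity_index(pop):.2f}")
```

This prints 0.00, 0.50 and 0.18: the index is 0 when everyone shares one language and approaches 1 as the population splits into many small language groups, so a lopsided two-language country scores well below an evenly split one.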

The Interrogative Mood: A Novel?

Just a quick post in case folks haven’t heard about this already; I got a copy for Christmas.

‘The Interrogative Mood: A Novel?’ by Padgett Powell – a book composed entirely of interrogative sentences or, rather, questions.

It has been hailed as a pioneering yet risky step in the author’s somewhat turbulent writing career, and has been praised by many puzzled readers who found unexpected enjoyment and intrigue in its pages, for example:

‘How this book works is beyond me, but, miraculously, it does’ (Village Voice)

‘It is a wondrous strange… a hydra-headed reflection of life as it is experienced, and of thought as it is felt’ (New York Times Book Review)

The book is not the first of its kind – ‘Gold Fools’ by fellow American novelist Gilbert Sorrentino is also completely written in interrogative sentences, and tells a Western adventure story whilst challenging and questioning genre-specific stereotypes and contemporary linguistic convention.

Even so, Powell’s book is still unique because it was written with a different objective. Unlike ‘Gold Fools’, there is no chronological story to this ‘novel’: Powell calls upon every sentence-forming configuration in English to dispense vast stores of accrued knowledge, factual information, and tantalising, mysterious hints about himself, his memories, and his life. Some interrogatives are curt and challenging, whereas others span paragraphs and pages thanks to some pretty serious sentence embedding. Through an agreeable barrage of dos, ifs, ares and all the WH-words, Powell not only covertly feeds us information about himself, but also forces us to think deeply about ourselves and those around us. He presents the reader with moral dilemmas interspersed with comparatively routine queries, encouraging us to consider how we might behave when faced with a variety of arbitrary and significant choices, and highlighting both humorous and perturbing inconsistencies in every arena of life.

Direction and premeditated structure are not immediately apparent in the novel (something to be examined, maybe?), and this works as a selling point. The reader is engaged by Powell’s gentle and inquisitive bullying, which encourages self-examination, reflection, and increased time spent on Wikipedia trying to track down some obscure reference or fact.

A warning though – reading this book hijacks the internal narrative, forcing you to think almost entirely in interrogatives for a good few hours afterwards.

Animal Signalling Theory 101 – The Handicap Principle

One of the most important concepts in animal signalling theory, proposed by Amotz Zahavi in a seminal 1975 paper and in later works (Zahavi 1977; Zahavi & Zahavi 1997), is the handicap principle. A general definition is that females have evolved mating preferences for males who display exaggerated ornaments or behaviours that are costly to maintain and develop, and that this cost ensures an ‘honest’ signal of male genetic quality.
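To make the core logic concrete, here is a deliberately simple toy model. It is only one of several possible formalizations and not Zahavi’s own (he gave none). Assume a male of quality q chooses a signal level s, receives a mating benefit b·s from choosy females, and pays a cost s²/q, so that the same signal is relatively more costly for a low-quality male. Setting the derivative b − 2s/q to zero gives the optimum s* = bq/2, which rises with quality, so females can read quality off the signal. If instead every male paid the same cost s², all would choose s* = b/2 and the signal would carry no information. The functional forms and names below are illustrative assumptions.

```python
def payoff(signal: float, quality: float, benefit: float = 1.0,
           differential_cost: bool = True) -> float:
    """Toy payoff for a signalling male: mating benefit minus signal cost.

    With differential_cost=True the cost is signal**2 / quality, so the same
    signal is more costly for a low-quality male; otherwise every male pays
    signal**2 regardless of quality.
    """
    cost = signal ** 2 / quality if differential_cost else signal ** 2
    return benefit * signal - cost


def optimal_signal(quality: float, benefit: float = 1.0,
                   differential_cost: bool = True) -> float:
    """Closed-form optimum from d(payoff)/d(signal) = 0.

    differential cost: benefit - 2 * signal / quality = 0 -> benefit * quality / 2
    uniform cost:      benefit - 2 * signal = 0           -> benefit / 2
    """
    return benefit * quality / 2 if differential_cost else benefit / 2


def grid_optimum(quality: float, differential_cost: bool = True,
                 step: float = 0.001, upper: float = 5.0) -> float:
    """Numerical check: best signal level on a grid from 0 to `upper`."""
    levels = [i * step for i in range(int(round(upper / step)) + 1)]
    return max(levels, key=lambda s: payoff(s, quality, 1.0, differential_cost))


for q in (0.5, 1.0, 2.0):
    print(f"quality {q}: differential cost -> {optimal_signal(q):.2f}, "
          f"uniform cost -> {optimal_signal(q, differential_cost=False):.2f}")
```

With differential costs the optimal signal scales with quality (0.25, 0.50, 1.00), while with uniform costs every male settles on 0.50. In this toy setting it is the quality-dependent cost, not cost as such, that keeps the signal honest.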

As a student, I found it quite difficult to pin down a working definition of this important type of signal, mainly because of the apparent ‘coining fest’ of terminology that has taken place since Zahavi outlined his original idea in 1975. For this reason, I have decided to provide a brief outline of the terminological and conceptual differences that exist in relation to the handicap principle, in an attempt to help anyone who might be struggling to navigate the literature.

Because Zahavi did not define the handicap principle mathematically, scholars have disagreed about the true nature of his original idea, and a number of interpretations can be found in the key literature. Until John Maynard Smith and David Harper simplified and clarified things wonderfully in their 2003 book Animal Signals, at least four different interpretations of the handicap were, to my knowledge, being used and explored empirically and through mathematical modelling, each with differences that are not obvious to grasp without delving into the maths.

The 20th Anniversary of Steven Pinker & Paul Bloom: Natural Language and Natural Selection (1990)

“a post on Pinker and Bloom’s original paper, and how the field has developed over these last twenty years, at some point in the next couple of weeks,”

Marc Hauser investigated for scientific misconduct

The Boston Globe reported today that Marc Hauser is on leave due to scientific misconduct. The Great Beyond summarises the article as follows:

The trouble centers on a 2002 paper published in the journal Cognition (subscription required). Hauser was the first author on the paper, which found that cotton-top tamarins are able to learn patterns – previously thought to be an important step in language acquisition. The paper has been retracted, for reasons which are reportedly unclear even to the journal’s editor, Gerry Altmann.

Two other papers, a 2007 article in Proceedings of the Royal Society B and a 2007 Science paper, were also flagged for investigation. A correction has been published on the first, and Science is now looking into concerns about the second. And the Globe article highlights other controversies, including a 2001 paper in the American Journal of Primatology, which has not been retracted although Hauser himself later said he was unable to replicate the results. Findings in a 1995 PNAS paper were also questioned by an outside researcher, Gordon Gallup of the State University of New York at Albany, who reviewed the original data and said he found “not a thread of compelling evidence” to support the paper’s conclusions.

Hauser has taken a year-long leave from the university.

Time Travel, Dreams and The Origin of Knowledge

I’ve been attending a weekly seminar on the Metaphysics of Time Travel, given by Alasdair Richmond. Yesterday, he was talking about the way knowledge arises in causal chains. Popper (1972 and various others) argues that “Knowledge comes into existence only by evolutionary, rational processes” (quoted in Paul Nahin, ‘Time Machines: Time Travel in Physics, Metaphysics and Science Fiction’, New York: American Institute of Physics, 1999: 312). Good news for us scholars of Cultural Evolution. However, Richmond also talked about David Lewis’s work on the nature of causality. There are three ways that causal chains can be set up:

The first is an infinite sequence of events, each caused by the previous one. For example, I’m typing this blog because my PhD work is boring, I’m doing a PhD because I was lured in by funding, I applied for funding because everyone else did … all the way back past my parents meeting and humans evolving, etc.

The second option is a finite sequence of events: like the first option, but with an initial event that caused all the others, such as the Big Bang.

The third option is a circular sequence of events. In this, A is caused by B, which is caused by A. For instance, I’m doing a PhD because I got funding, and I got funding because I’m doing a PhD, because I got funding. There is no initial cause; the states just are. This third option seems really odd, not least because it involves time travel. Where do the states come from? However, argues Lewis, they are no odder than either of the other two options. Option one has a cause for every event but no original cause, and option two has an initial state with no cause. So, how on earth can we get at the origin of knowledge if there is no logical possibility of determining the origin of any sequence of events?

One answer is just to stop caring after a certain point. We linguists are unlikely ever to get to the point where we’re studying vowel shifts in the first few seconds of the Big Bang.

The other answer is noise. Richmond suggested that ‘Eureka’ moments triggered by random occurrences, for instance mishearing someone or a strange dream, could create information without prior cause (Nicholas J. J. Smith, ‘Bananas Enough for Time Travel?’, British Journal for the Philosophy of Science, Vol. 48, 1997: 363–89).

Spookily, the idea I submitted for my PhD application came to me in a dream.

What Makes Humans Unique? (II): Six Candidates for What Makes Human Cognition Uniquely Human

What makes humans unique? This never-ending debate has sparked a long list of proposals and counter-arguments and, to quote from a recent article on this topic,

“a similar fate most likely awaits some of the claims presented here. However such demarcations simply have to be drawn once and again. They focus our attention, make us wonder, and direct and stimulate research, exactly because they provoke and challenge other researchers to take up the glove and prove us wrong.” (Høgh-Olesen 2010: 60)

In this post, I’ll focus on six candidates that might play a part in constituting what makes human cognition unique, though there are countless others (see, for example, here).

One of the key candidates for what makes human cognition unique is of course language and symbolic thought. We are “the articulate mammal” (Aitchison 1998) and an “animal symbolicum” (Cassirer 2006: 31). And if one defining feature truly fits our nature, it is that we are the “symbolic species” (Deacon 1998). But as evolutionary anthropologists Michael Tomasello and his colleagues argue,

“saying that only humans have language is like saying that only humans build skyscrapers, when the fact is that only humans (among primates) build freestanding shelters at all” (Tomasello et al. 2005: 690).

Language and Social Cognition

According to Tomasello and many other researchers, language and symbolic behaviour, although they certainly are crucial features of human cognition, are derived from human beings’ unique capacities in the social domain. As Willard van Orman Quine pointed out, language is essentially a “social art” (Quine 1960: ix). Specifically, it builds on the foundations of infants’ capacities for joint attention, intention-reading, and cultural learning (Tomasello 2003: 58). Linguistic communication, on this view, is essentially a form of joint action rooted in common ground between speaker and hearer (Clark 1996: 3 & 12), in which they make “mutually manifest” relevant changes in their cognitive environment (Sperber & Wilson 1995). This is the precondition for the establishment and (co-)construction of symbolic spaces of meaning and shared perspectives (Graumann 2002, Verhagen 2007: 53f.). These abilities, then, had to evolve prior to language, however great language’s effect on cognition may be in general (Carruthers 2002), and if we look for the origins and defining features of human uniqueness we should probably look in the social domain first.

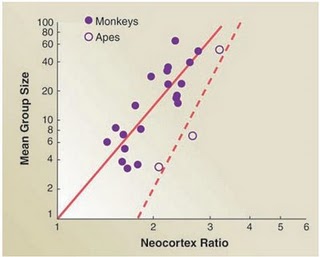

Corroborating evidence for this view comes from comparisons of brain size among primates. Firstly, there are significant positive correlations between group size and neocortex size in primates (Dunbar & Shultz 2007). Secondly, brain size also correlates positively with technological innovation and tool use, both of which are facilitated by social learning (Reader & Laland 2002). Our brain, it seems, is essentially a “social brain” that evolved to cope with the demands of an increasingly complex primate social world (Dunbar & Shultz 2007, Lewin 2005: 220f.).

Thus, “although innovation, tool use, and technological invention may have played a crucial role in the evolution of ape and human brains, these skills were probably built upon mental computations that had their origins and foundations in social interactions” (Cheney & Seyfarth 2007: 283).

What Makes Humans Unique? (I): The Evolution of the Human Brain

Hello! This is my first post here at Replicated Typo, and I thought I’d start by reposting a slightly modified version of a three-part series on the evolution of the human mind that I did last year over at my blog Shared Symbolic Storage.

So in this and my next posts I will have a look at how human cognition evolved from the perspective of cognitive science, especially ‘evolutionary linguistics,’ comparative psychology and developmental psychology.

In this post I’ll focus on the evolution of the human brain.

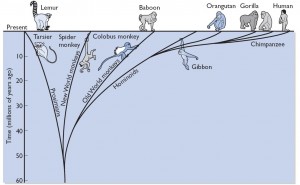

Human Evolution

We are evolved primates. (As are all other primates, of course. So maybe it is better to say that we, like all other primates, are evolved beings with a unique set of specializations, adaptations and features.)

In our lineage, we share a common ancestor with orangutans (about 15 million years ago (mya)), gorillas (about 10 mya), and, most recently, chimpanzees and bonobos (5 to 7 mya). We not only share a significant amount of DNA with our primate cousins, but also major anatomical features (Gazzaniga 2008: 51f., Lewin 2005: 61). These include, for example, our basic skeletal anatomy, our facial muscles, and our fingernails (Lewin 2005: 218ff.).

What most distinguishes us as humans on an anatomical level are our bizarre hair distribution, our upright posture and the skeletal modifications necessary for it, including a propensity for endurance running, our opposable thumbs, fat deposits that are unusually extensive (Preuss 2004: 5), and an intestinal tract only 60% the size expected of primates our size (Gibbons 2007: 1558).

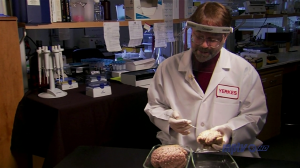

Finally, there is also a distinguishing feature that is a much more remarkable violation of expectations – a brain three times the size expected of a primate our size. This is all the more interesting as primates are already twice as encephalized as other mammals (Lewin 2005: 217). A direct comparison puts numbers on this difference: whereas human brains have an average volume of 1251.8 cubic centimetres and weigh about 1300 grams, the brains of the other great apes have an average volume of only 316.7 cubic centimetres and weigh between 350 and 500 grams (Rilling 2006: 66, Preuss 2004: 8). A human brain has approximately a hundred billion neurons, each connected to about one thousand other neurons, for about one hundred trillion synaptic connections in total (Gazzaniga 2008: 291). If you counted the connections in the napkin-sized cortex alone, one per second, you would finish only after 32 million years (Edelman 1992: 17).
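The connection figures in this paragraph can be checked with some back-of-the-envelope arithmetic. The sketch below assumes that connections are counted one per second (the rate usually attached to Edelman’s illustration) and uses a 365.25-day year; the helper names are hypothetical.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60  # assumption: a 365.25-day year


def years_to_count(connections: float, per_second: float = 1.0) -> float:
    """Years needed to count `connections` one at a time at `per_second` per second."""
    return connections / per_second / SECONDS_PER_YEAR


def connections_in(years: float, per_second: float = 1.0) -> float:
    """How many connections could be counted in `years` at `per_second` per second."""
    return years * SECONDS_PER_YEAR * per_second


neurons = 100e9           # ~a hundred billion neurons (Gazzaniga 2008: 291)
links_per_neuron = 1_000  # ~one thousand connections each
synapses = neurons * links_per_neuron

print(f"total synapses: {synapses:.1e}")                         # 1.0e+14
print(f"years to count them: {years_to_count(synapses):.2e}")    # 3.17e+06
print(f"counted in 32 million years: {connections_in(32e6):.2e}")  # 1.01e+15
```

On these assumptions, a hundred billion neurons with about a thousand connections each does give the quoted hundred trillion synapses, which would take roughly 3.2 million years to count. Thirty-two million years of counting instead corresponds to roughly 10^15 connections, so Edelman’s figure for the cortex appears to rest on an estimate about ten times larger than Gazzaniga’s whole-brain figure; the two numbers should not be read as drawn from the same estimate.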

Expensive Tissue

The human brain is also extremely “expensive tissue” (Aiello & Wheeler 1995): although it accounts for only 2% of an adult’s body weight, it accounts for 20–25% of an adult’s resting oxygen and energy intake (Attwell & Laughlin 2001: 1143). In early life, the brain even accounts for up to 60–70% of the body’s total energy requirements. A chimpanzee’s brain, in comparison, consumes only about 8–9% of its resting metabolism (Aiello & Wells 2002: 330). The human brain’s energy demands are about 8 to 10 times higher than those of skeletal muscle (Dunbar & Shultz 2007: 1344), and its rate of energy consumption equals that of a marathon runner’s leg muscles while running (Attwell & Laughlin 2001: 1143). All in all, only the heart consumes energy at a higher rate (Dunbar & Shultz 2007: 1344).
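The ‘expensive tissue’ point can be put as a single ratio: a tissue’s share of resting energy use divided by its share of body mass. This is a minimal sketch using only the adult figures quoted above; the function name is hypothetical, and it leaves out the infant and chimpanzee figures because the text gives no matching mass shares for them.

```python
def relative_metabolic_intensity(energy_share: float, mass_share: float) -> float:
    """Energy used per unit of body mass, relative to the whole-body average.

    A value of 1.0 means the tissue burns energy at the body's average rate
    per gram; 10.0 means ten times the average rate.
    """
    if not 0 < mass_share <= 1:
        raise ValueError("mass_share must be a fraction in (0, 1]")
    return energy_share / mass_share


# Adult human brain, figures as quoted above (Attwell & Laughlin 2001: 1143):
# ~2% of body mass, 20-25% of resting energy intake.
low = relative_metabolic_intensity(0.20, 0.02)
high = relative_metabolic_intensity(0.25, 0.02)
print(f"per gram, the adult brain uses {low:.1f} to {high:.1f} times the body average")
```

On these figures, each gram of adult brain burns roughly 10 to 12.5 times as much energy at rest as the average gram of body tissue, which is why a large brain needs such a strong selection pressure to pay for itself.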

Consequently, if we want to understand the evolutionary trajectory that led to human cognition there is the problem that

“because the cost of maintaining a large brain is so great, it is intrinsically unlikely that large brains will evolve merely because they can. Large brains will evolve only when the selection factor in their favour is sufficient to overcome the steep cost gradient” (Dunbar 1998: 179).

This is especially important for anyone who wants to come up with an “adaptive story” of how our brain got so big: they have to identify a selection pressure, operative in the Pleistocene “environment of evolutionary adaptedness”, strong enough to have allowed such “expensive tissue” to evolve in the first place (Bickerton 2009: 165f.).

What About the Brain is Uniquely Human?

If we look to the brain for possible hints, we first find that there is presently “no good evidence that humans do, in fact, possess uniquely human cortical areas” (although the jury is still out) (Preuss 2004: 9). Instead, functions specific to humans appear to be represented in areas homologous to those of other primates, and in the course of human evolution some areas of the brain seem to have expanded disproportionately, “especially higher-order cortical areas, including the prefrontal cortex” (Preuss 2004: 9, Deacon 1998: 435-438). This suggests that humans are not simply ‘better’ at thinking than other animals, but that they think differently (Preuss 2004: 7). The expansion and apparent specialization of only certain kinds of neuronal areas could indicate a qualitative shift in neuronal activity brought about by the re-organization of existing features, leading to a wholly different style of cognition (Deacon 1998: 435-438, Rilling 2006: 75).

This scenario squares well with what we know about the way evolution works, namely that it always has to work with the raw materials that are available, and constantly co-opts and tinkers with existing structures, at times producing haphazard, cobbled-together, but functional results (Gould & Lewontin 1979, Gould & Vrba 1982). Given the relatively short time span for the evolution of the “most complex structure in the known universe”, as it is sometimes called, we have to acknowledge how precious little time the evolutionary process had for ‘debugging.’ It could well be that what makes the human mind so unique is that it is an imperfect ‘Kluge’: “a clumsy or inelegant – yet surprisingly effective – solution to a problem,” like the Apollo 13 CO2 filter or an on-the-spot invention by MacGyver (Marcus 2008: 3f.). It may thus well turn out that what we think makes us so special is a mental “oddity of our species’ way of understanding” the world around us (Povinelli & Vonk 2003: 160). It is reasonable, then, to assume that human cognition did not simply get better across the board, but that we instead owe our unique style of thinking to quite specific specializations of the human mind.

With this in mind, we can now ask how these neurological differences translate into psychological differences. But this is where the problem starts: which features really distinguish us as humans, and which are more derivative than others? A true candidate for what got uniquely human cognition off the ground has to pass this test and also solve the problem of how such “expensive tissue” could evolve in the first place.

In my next post I will have a look at six candidates for what makes human cognition unique.

References:

Aiello, Leslie C. and Peter Wheeler (1995): “The Expensive-Tissue Hypothesis: The Brain and the Digestive System in Human and Primate Evolution.” Current Anthropology 36 (2). DOI: 10.1086/204350

Aiello, Leslie C. and Jonathan C. K. Wells (2002): “Energetics and the Evolution of the Genus Homo.” Annual Review of Anthropology 31 (1): 323–338. DOI: 10.1146/annurev.anthro.31.040402.085403

Attwell, David and Simon B. Laughlin (2001): “An Energy Budget for Signaling in the Grey Matter of the Brain.” Journal of Cerebral Blood Flow and Metabolism 21: 1133–1145.

Bickerton, Derek (2009): Adam’s Tongue: How Humans Made Language, How Language Made Humans. New York: Hill and Wang.

Deacon, Terrence William (1997): The Symbolic Species: The Co-evolution of Language and the Brain. New York / London: W. W. Norton.

Dunbar, Robin I. M. (1998): “The Social Brain Hypothesis.” Evolutionary Anthropology 6: 178–190.

Dunbar, Robin I. M. and Susanne Shultz (2007): “Evolution in the Social Brain.” Science 317 (5843): 1344–1347. DOI: 10.1126/science.1145463

Edelman, Gerald Maurice (1992): Bright Air, Brilliant Fire: On the Matter of the Mind. New York: Basic Books.

Gazzaniga, Michael S. (2008): Human: The Science Behind What Makes Us Unique. New York: Harper-Collins.

Gibbons, Ann (2007): “Food for Thought.” Science 316: 1558–1560.

Gould, Stephen Jay and Richard C. Lewontin (1979): “The Spandrels of San Marco and the Panglossian Paradigm: A Critique of the Adaptationist Programme.” Proceedings of the Royal Society of London B: Biological Sciences 205 (1161): 581–598.

Gould, Stephen Jay and Elizabeth S. Vrba (1982): “Exaptation: A Missing Term in the Science of Form.” Paleobiology 8 (1): 4–15.

Lewin, Roger (2005): Human Evolution: An Illustrated Introduction. Oxford: Blackwell.

Marcus, Gary (2008): Kluge: The Haphazard Evolution of the Human Mind. London: Faber and Faber.

Povinelli, Daniel J. and Jennifer Vonk (2003): “Chimpanzee Minds: Suspiciously Human?” Trends in Cognitive Sciences 7 (4): 157–160.

Preuss, Todd M. (2004): “What Is It Like to Be a Human?” In: Michael S. Gazzaniga (ed.): The Cognitive Neurosciences III. Third edition. Cambridge, MA: MIT Press: 5–22.

Rilling, James K. (2006): “Human and Nonhuman Primate Brains: Are They Allometrically Scaled Versions of the Same Design?” Evolutionary Anthropology: Issues, News, and Reviews 15 (2): 65–77. DOI: 10.1002/evan.20095

Some Links #11: Linguistic Diversity or Homogeneity?

Linguistic Diversity = Poverty. Razib Khan basically argues, correctly in my opinion, that linguistic homogeneity is good for economic development and general prosperity. From the perspective of a linguist, however, I do like the idea of really obscure linguistic communities, ready and waiting to be discovered and documented. On the flip side, it is selfish of me to want these small communities to remain in a bubble, cut off from the very benefits I enjoy as a member of a modern, post-industrialised society. Our goal, then, should probably focus more on documenting these languages than on saving them. Razib has recently posted another, quite lengthy post on the topic: Knowledge is not value-free.

When did we first ‘Rock the Mic’? A meeting of my two favourite interests over at the New York Times: linguistics and hip hop. Ben Zimmer writes:

In “Rapper’s Delight,” the M.C. Big Bank Hank raps, “I’m gonna rock the mic till you can’t resist,” using what was then a novel sense of rock, defined by the O.E.D. as “to handle effectively and impressively; to use or wield effectively, esp. with style or self-assurance.” To be sure, singers in the prerap era often used rock as a transitive verb, whether it was Bill Haley promising, “We’re gonna rock this joint tonight,” or the bluesman Arthur “Big Boy” Crudup more suggestively wailing, “Rock me, mama.” But the M.C.’s of early hip-hop took the verb in a new direction, transforming the microphone (abbreviated in rap circles as mic, not mike) into an emblem of stylish display. Later elaborations on the theme would allow clothes and other accessories to serve as the objects of rock, as when Kanye West boasted in a 2008 issue of Spin magazine, “I rock a bespoke suit and I go to Harold’s for fried chicken.”

It’d be nice to see more on linguistics and hip hop; having said that, I might write a bit on the subject myself. In fact, I would go as far as to say that hip hop is part of the reason why I fell into linguistics: its eloquent wordplay encouraged, and perhaps moulded, my fascination with language. To demonstrate why, here’s a track by Maryland rapper Edan, who certainly knows how to rock the mic:

Life without language. Neuroanthropology provides yet another great read, this time on life without language: something that has always crept into my thoughts, yet seems impossible to imagine (as I’m already so embedded in a language-using society). The post goes on to discuss Susan Schaller and the case of a profoundly deaf Mexican immigrant who had never learned sign language:

The man she would call, ‘Ildefonso,’ had figured out how to survive, in part by simply copying those around him, but he had no idea what language was. Schaller found that he observed people’s lips and mouth moving, unaware that they were making sound, unaware that there was sound, trying to figure out what was happening from the movements of the mouths. She felt that he was frustrated because he thought everyone else could figure things out from looking at each others’ moving mouths.

One problem for Schaller’s efforts was that Ildefonso’s survival strategy, imitation, actually got in the way of him learning how to sign because it short-circuited the possibility of conversation. As she puts it, Ildefonso acted as if he had a kind of visual echolalia (we sometimes call it ‘echopraxia’), simply copying the actions he saw.

One Man’s Take on the Facts of the Matter. Babel’s Dawn takes a look at Tecumseh Fitch’s book, The Evolution of Language, and concisely explains a clear divide between two camps in evolutionary linguistics:

One clear difference between the scenarios is in the role of the individual in relation to language. Language is somehow built into the brain in Chomsky’s thought-first scenario, while it is learned from others in the topics-first approach. Empiricists, like Morten Christiansen and Nicholas Chater, see language as ‘out there’ to be learned while nativists, like Fitch and Chomsky, say there is an internal, I-language, and the language out there is merely the sum of all those little I-languages. How to settle the dispute? Look for factual evidence.