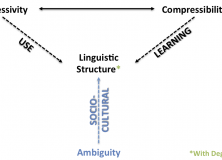

Following on from yesterday’s post, where I argued that degeneracy emerges as a design solution for ambiguity pressures, a Reddit commenter pointed me to a cool paper by Piantadosi et al. (2012) that contained the following quote:

The natural approach has always been: Is [language] well designed for use, understood typically as use for communication? I think that’s the wrong question. The use of language for communication might turn out to be a kind of epiphenomenon… If you want to make sure that we never misunderstand one another, for that purpose language is not well designed, because you have such properties as ambiguity. If we want to have the property that the things that we usually would like to say come out short and simple, well, it probably doesn’t have that property (Chomsky, 2002: 107).

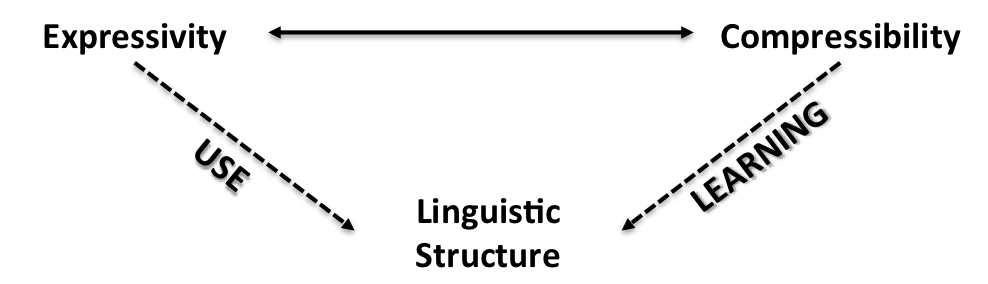

The paper itself argues against Chomsky’s position by claiming that ambiguity allows for more efficient communication systems. First, looking at ambiguity from the perspective of coding theory, Piantadosi et al. argue that any good communication system will leave out information already available in the context (assuming the context is informative about the intended meaning). Their second point, which they test through a corpus analysis of English, Dutch and German, is that as long as there are some ambiguities the context can resolve, ambiguity will be used to make communication easier. In short, ambiguity emerges as a result of tradeoffs between ease of production and ease of comprehension, with communication systems favouring hearer inference over speaker effort:

The essential asymmetry is: inference is cheap, articulation expensive, and thus the design requirements are for a system that maximizes inference. (Hence … linguistic coding is to be thought of less like definitive content and more like interpretive clue.) (Levinson, 2000: 29).
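To make the coding-theory point a bit more concrete, here is a minimal sketch of my own (not from the paper) in Python. It assumes a single ambiguous word form, something like "bank", with two meanings and two contexts; the joint probabilities, the meaning labels and the context labels are all invented for illustration. It compares the uncertainty about the intended meaning without context, H(M), against the uncertainty once the context is known, H(M|C). If the context is informative, the second number is smaller, which is the sense in which a code can afford to leave that information out of the signal.

```python
import math
from collections import defaultdict

# Hypothetical joint probabilities P(meaning, context) for one ambiguous
# word form. These numbers are invented purely for illustration.
joint = {
    ("river", "fishing"): 0.45,
    ("finance", "fishing"): 0.05,
    ("river", "banking"): 0.05,
    ("finance", "banking"): 0.45,
}

def entropy(probs):
    """Shannon entropy in bits of a probability distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def marginal(joint, index):
    """Sum the joint distribution down to one variable (0 = meaning, 1 = context)."""
    totals = defaultdict(float)
    for key, p in joint.items():
        totals[key[index]] += p
    return dict(totals)

def conditional_entropy(joint):
    """H(M | C) = sum over contexts c of P(c) * H(M | C = c)."""
    p_context = marginal(joint, 1)
    total = 0.0
    for c, pc in p_context.items():
        # Distribution over meanings given this particular context.
        conditional = [p / pc for (_, ctx), p in joint.items() if ctx == c]
        total += pc * entropy(conditional)
    return total

h_m = entropy(marginal(joint, 0).values())   # uncertainty without context
h_m_given_c = conditional_entropy(joint)     # uncertainty once context is known
print(f"H(M) = {h_m:.3f} bits, H(M|C) = {h_m_given_c:.3f} bits")
```

With these made-up numbers the context removes roughly half of the uncertainty about the meaning. The more informative the context, the closer H(M|C) gets to zero, and the less the signal itself needs to disambiguate.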

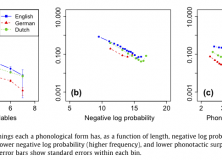

If this asymmetry exists, and hearers are good at disambiguating in context, then a direct result of such a tradeoff is that linguistic units which require less effort should be more ambiguous. This is what they found in their corpus analysis of word length, word frequency and phonotactic probability:

We tested predictions of this theory, showing that words and syllables which are more efficient are preferentially re-used in language through ambiguity, allowing for greater ease overall. Our regression on homophones, polysemous words, and syllables – though similar – are theoretically and statistically independent. We therefore interpret positive results in each as strong evidence for the view that ambiguity exists for reasons of communicative efficiency (Piantadosi et al., 2012: 288).

At some point, I’d like to offer a more comprehensive overview of this paper, but this will have to wait until I’ve read more of the literature. Until then, here are some graphs of the results from their paper:
