There is a battle about to commence. A battle in the world of cognitive modelling. Or at least a bit of a skirmish. Two articles to be published in Trends in Cognitive Sciences debate the merits of approaching cognition from different ends of the microscope.

On the side of probabilistic modelling we have Tom Griffiths, Nick Chater, Charles Kemp, Amy Perfors and Joshua Tenenbaum. Representing (perhaps non-symbolically) emergentist approaches are James McClelland, Matthew Botvinick, David Noelle, David Plaut, Timothy Rogers, Mark Seidenberg and Linda B. Smith. This contest is not short of heavyweights.

However, the first battleground seems to be who can come up with the most complicated diagram. I leave this decision to the reader (see the first two images).

The central issue is which approach is more productive for explaining phenomena in cognition. David Marr’s levels of explanation comprise a ‘computational’ characterisation of the problem, an ‘algorithmic’ description of the representations and processes used to solve it, and an ‘implementational’ account, which focusses on how the task is actually carried out by real brains. The structured probabilistic approach works ‘top-down’, while emergentism works ‘bottom-up’.

Structured approach

The structured probabilistic approach claims to be better suited to answering questions such as: How much information is needed to solve a problem? What representations are required, and what are the constraints on learning? It can also apply qualitatively different kinds of structure to different domains and inferences.

Using hierarchies of structure means that the model can be influenced by high-level information. For instance, if you believe dolphins are fish, but then hear someone say “they may look like fish, but they are in fact mammals”, then you may change your mind immediately. The probabilistic team argue that connectionist models can’t incorporate high-level information so easily, and can’t ‘change their minds’ based on little data. They also argue that structured approaches can separate parts of a cognitive problem, for instance learning the structure and strength of a cause and effect. Connectionist models combine these two aspects, and it’s often difficult to interpret how a connectionist model is solving a problem.
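To make the ‘change their minds’ point concrete, here is a toy sketch with made-up numbers (none of them come from either paper). A Bayesian learner that already has ‘mammal’ in its hypothesis space can flip its belief after a single trusted statement, whereas a single connectionist-style association weight trained with the delta rule, at an assumed learning rate of 0.1, only creeps towards the new answer. The helper names `bayes_update` and `delta_rule_step` are hypothetical.

```python
# Toy contrast between a Bayesian update and a delta-rule update.
# All probabilities and the learning rate are illustrative assumptions.

def bayes_update(prior, likelihood):
    """Posterior over hypotheses after one observation (Bayes' rule)."""
    unnormalised = {h: prior[h] * likelihood[h] for h in prior}
    total = sum(unnormalised.values())
    return {h: p / total for h, p in unnormalised.items()}


def delta_rule_step(weight, target, learning_rate):
    """One error-correcting update of a single association weight."""
    return weight + learning_rate * (target - weight)


# Prior belief: dolphins are probably fish.
prior = {"fish": 0.9, "mammal": 0.1}
# Assumed probability of hearing "they are in fact mammals" from a
# trusted speaker under each hypothesis.
likelihood = {"fish": 0.01, "mammal": 0.9}
posterior = bayes_update(prior, likelihood)

# Network-style learner: the 'dolphin -> mammal' weight starts at 0.1
# and receives one training example with target 1.0.
weight = delta_rule_step(0.1, target=1.0, learning_rate=0.1)

print(f"Bayesian P(mammal) after one statement: {posterior['mammal']:.2f}")  # 0.91
print(f"Delta-rule association after one statement: {weight:.2f}")  # 0.19
```

The contrast depends heavily on the assumed numbers: a network with a larger learning rate would move faster, so this illustrates the argument rather than settling it.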

Emergent approach

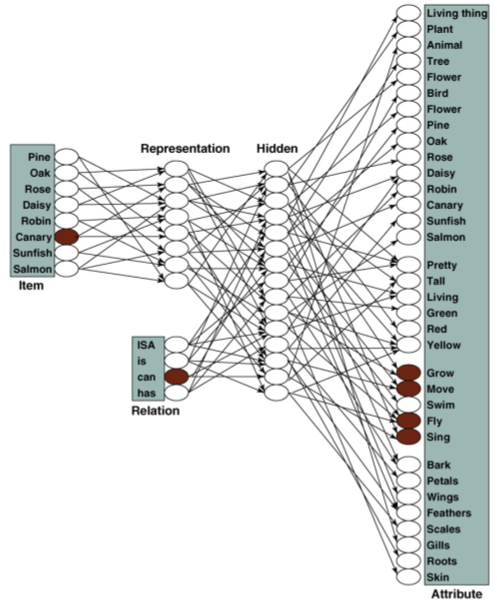

The emergentist camp make three counter-claims. Firstly, they put equal emphasis on all three levels of explanation: computational, algorithmic and implementational. Secondly, the ‘top-down’ approach is in danger of building inaccurate representations and structures into a theory. Instead of making claims about how cognition is structured, emergentists argue that structure should be allowed to emerge from the data. They also note that the brain may not be solving problems optimally, as the structured approach assumes. Below is their diagram of an emergent model of categorisation: a neural network which learns relations between objects and features.

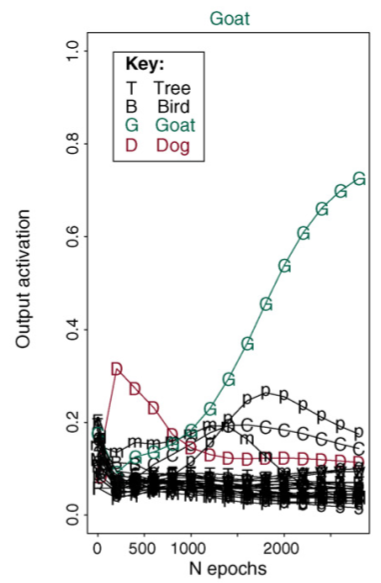
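For readers who haven’t met this kind of model, here is a minimal numpy sketch in the same spirit: an item and a relation (‘can’, ‘has’, ‘is’) are fed in, and the network learns by backpropagation to switch on the right attributes. The items, attributes and facts are a tiny made-up set, and the layer sizes, learning rate and number of epochs are assumptions, so this is a toy rather than the authors’ model. Nothing tells the network that canaries and salmon are both animals; any such grouping in its item representations has to come from training.

```python
import numpy as np

items = ["canary", "salmon", "oak", "rose"]
relations = ["can", "has", "is"]
attributes = ["grow", "move", "fly", "swim", "wings", "gills",
              "bark", "petals", "yellow", "red", "tall", "living"]

# Hypothetical toy facts: (item, relation) -> attributes that are true.
facts = {
    ("canary", "can"): {"grow", "move", "fly"},
    ("canary", "has"): {"wings"},
    ("canary", "is"): {"yellow", "living"},
    ("salmon", "can"): {"grow", "move", "swim"},
    ("salmon", "has"): {"gills"},
    ("salmon", "is"): {"red", "living"},
    ("oak", "can"): {"grow"},
    ("oak", "has"): {"bark"},
    ("oak", "is"): {"tall", "living"},
    ("rose", "can"): {"grow"},
    ("rose", "has"): {"petals"},
    ("rose", "is"): {"red", "living"},
}

# One-hot input codes for items and relations; binary attribute targets.
X_item = np.array([np.eye(len(items))[items.index(i)] for i, _ in facts])
X_rel = np.array([np.eye(len(relations))[relations.index(r)] for _, r in facts])
Y = np.array([[1.0 if a in facts[k] else 0.0 for a in attributes] for k in facts])


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


rng = np.random.default_rng(0)
n_rep, n_hid = 4, 8  # assumed layer sizes
W_ir = rng.normal(0, 0.1, (len(items), n_rep))       # item -> representation
W_rh = rng.normal(0, 0.1, (n_rep, n_hid))            # representation -> hidden
W_qh = rng.normal(0, 0.1, (len(relations), n_hid))   # relation -> hidden
W_ho = rng.normal(0, 0.1, (n_hid, len(attributes)))  # hidden -> attributes
b_r, b_h, b_o = np.zeros(n_rep), np.zeros(n_hid), np.zeros(len(attributes))

lr, epochs = 0.2, 5000
losses = []
for _ in range(epochs):
    # Forward pass over all twelve item/relation patterns at once.
    rep = sigmoid(X_item @ W_ir + b_r)
    hid = sigmoid(rep @ W_rh + X_rel @ W_qh + b_h)
    out = sigmoid(hid @ W_ho + b_o)
    err = out - Y
    losses.append(float(np.mean(err ** 2)))
    # Backpropagate the squared error through the sigmoid layers.
    d_out = err * out * (1 - out)
    d_hid = (d_out @ W_ho.T) * hid * (1 - hid)
    d_rep = (d_hid @ W_rh.T) * rep * (1 - rep)
    # Gradient-descent weight updates.
    W_ho -= lr * hid.T @ d_out
    b_o -= lr * d_out.sum(axis=0)
    W_rh -= lr * rep.T @ d_hid
    W_qh -= lr * X_rel.T @ d_hid
    b_h -= lr * d_hid.sum(axis=0)
    W_ir -= lr * X_item.T @ d_rep
    b_r -= lr * d_rep.sum(axis=0)

print(f"error: {losses[0]:.3f} at start, {losses[-1]:.3f} after training")
```

The `losses` list records the mean squared error at each epoch, so plotting it shows a gradual learning curve rather than an all-or-nothing switch.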

The advantages of the emergentist approach are demonstrated in the extreme in this paper: the next graph shows the model’s output activations over time. Astoundingly, ‘Goat’ wins out, despite not being represented in the model at all. Maybe this is evidence of a deep goat bias in emergent networks? Or a typo.

Finally, the emergentists argue that the structured approach cannot easily account for elements of development. Children exhibit learning curves and reversals in behaviour which can be captured by an emergent process, but not by a model which approaches the problem optimally.

Burning both ends of the cognitive candle

I agree with the Emergentists that assuming how the problem is structured is dangerous. Part of my own work involves showing that structured approaches have made assumptions about language learning and transmission that are not upheld when considering bilingualism.

I also disagree with Griffiths et al.’s claim that structured probabilistic models can determine how much information is needed to solve a problem. Take, for instance, visual navigation problems, where an agent must find its way home using visual sensors. Early approaches assumed that you needed an internal map of the environment, an estimate of where you were and how uncertain that estimate was, representations of headings, and so on, and research proceeded to tackle the problem from a very high level. However, Zeil, Hofmann & Chahl (2003) showed that the problem could be solved fairly effectively by taking the pixel-by-pixel difference between an image taken at the current location and an image taken at the target location: because this difference grows smoothly with distance from the goal, an agent can get home simply by moving in whichever direction makes it smaller. Importantly, this only worked in real, noisy environments, not in artificially sparse labs. This may explain why ants and bees can navigate accurately despite having small brains: there’s enough complexity in the world for low-level systems to exploit.
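Here is a toy sketch of the image-difference idea under some loud assumptions: the world is a made-up ring of landmarks, `render_panorama` is a hypothetical renderer that turns them into a one-dimensional panoramic strip, and the agent keeps a fixed compass heading so that snapshots can be compared without rotating them. The root-mean-square pixel difference plays the role of Zeil et al.’s image difference function, and the agent steps in whichever of eight directions lowers it. This is a simplified illustration, not a reconstruction of Zeil et al.’s analysis, which used real panoramic images of outdoor scenes.

```python
import numpy as np


def render_panorama(pos, landmarks, n_pixels=72):
    """Hypothetical toy renderer: a 1D panoramic image seen from pos.

    Each landmark (x, y, height, radius) adds a Gaussian blob whose
    brightness scales with apparent elevation (height / distance) and
    whose width scales with apparent angular size (radius / distance).
    """
    azimuths = np.linspace(-np.pi, np.pi, n_pixels, endpoint=False)
    image = np.zeros(n_pixels)
    for x, y, height, radius in landmarks:
        dx, dy = x - pos[0], y - pos[1]
        dist = max(np.hypot(dx, dy), 1e-6)
        bearing = np.arctan2(dy, dx)
        # Wrap the angular difference into [-pi, pi).
        diff = (azimuths - bearing + np.pi) % (2 * np.pi) - np.pi
        width = max(radius / dist, 0.02)
        image += (height / dist) * np.exp(-diff ** 2 / (2 * width ** 2))
    return image


def image_difference(a, b):
    """Root-mean-square pixel-by-pixel difference between two images."""
    return float(np.sqrt(np.mean((a - b) ** 2)))


def home_by_descent(start, landmarks, snapshot, step=0.2, max_steps=200):
    """Greedily step in whichever of 8 directions lowers the difference."""
    pos = np.asarray(start, dtype=float)
    current = image_difference(render_panorama(pos, landmarks), snapshot)
    history = [current]
    directions = [np.array([np.cos(a), np.sin(a)])
                  for a in np.linspace(0, 2 * np.pi, 8, endpoint=False)]
    for _ in range(max_steps):
        candidates = [pos + step * d for d in directions]
        scores = [image_difference(render_panorama(c, landmarks), snapshot)
                  for c in candidates]
        best = int(np.argmin(scores))
        if scores[best] >= current:
            break  # no step lowers the difference: a (local) minimum
        pos, current = candidates[best], scores[best]
        history.append(current)
    return pos, history


# Made-up world: 30 landmarks in a ring 3 to 10 units from home at (0, 0).
rng = np.random.default_rng(1)
landmarks = []
for _ in range(30):
    r, theta = rng.uniform(3.0, 10.0), rng.uniform(-np.pi, np.pi)
    landmarks.append((r * np.cos(theta), r * np.sin(theta),
                      rng.uniform(0.5, 3.0), rng.uniform(0.2, 1.0)))

snapshot = render_panorama(np.array([0.0, 0.0]), landmarks)
final_pos, history = home_by_descent([1.5, -1.0], landmarks, snapshot)
print(f"start distance {np.hypot(1.5, -1.0):.2f}, "
      f"end distance {np.linalg.norm(final_pos):.2f}")
```

Because the descent only accepts steps that reduce the difference, it stops at a local minimum; whether that minimum is home depends on the start lying inside the snapshot’s catchment area.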

At the same time, emergent models do make assumptions about the problem in the way they represent the input. In the model above, objects, aspects and relations are divided up already. There’s no way for the model to ‘develop’ new types of relation.

There may also be a Proximate/Ultimate division between the two camps. The emergentist approach is more focussed on the mechanism – how a brain solves the problem. The structured probabilistic approach is more focussed on why the brain solves the problem in the way that it does.

I wonder how real this debate is. It wasn’t so long ago that connectionists and dynamical systems researchers were at each other’s throats, and yet here they are, united against Bayesian evil. There are models which use top-down and bottom-up approaches at the same time. Friston’s dynamic expectation-maximisation model uses hierarchies of structured models, but they work like neural nets for learning and like Bayesian models for production. In the end, it’s likely that both of these approaches will contribute to the understanding of cognition and the development of AI systems.

Griffiths, T., Chater, N., Kemp, C., Perfors, A., & Tenenbaum, J. (2010). Probabilistic models of cognition: exploring representations and inductive biases. Trends in Cognitive Sciences, 14 (8), 357-364. DOI: 10.1016/j.tics.2010.05.004

McClelland, J., Botvinick, M., Noelle, D., Plaut, D., Rogers, T., Seidenberg, M., & Smith, L. (2010). Letting structure emerge: connectionist and dynamical systems approaches to cognition. Trends in Cognitive Sciences, 14 (8), 348-356. DOI: 10.1016/j.tics.2010.06.002

Zeil, J., Hofmann, M. I., & Chahl, J. S. (2003). Catchment areas of panoramic snapshots in outdoor scenes. Journal of the Optical Society of America A, 20 (3), 450-469. PMID: 12630831
