Last week saw the publication of my latest paper, with co-authors Simon Kirby and Kenny Smith, looking at how languages adapt to their contextual niche (link to the OA version and here’s the original). Here’s the abstract:

It is well established that context plays a fundamental role in how we learn and use language. Here we explore how context links short-term language use with the long-term emergence of different types of language systems. Using an iterated learning model of cultural transmission, the current study experimentally investigates the role of the communicative situation in which an utterance is produced (situational context) and how it influences the emergence of three types of linguistic systems: underspecified languages (where only some dimensions of meaning are encoded linguistically), holistic systems (lacking systematic structure) and systematic languages (consisting of compound signals encoding both category-level and individuating dimensions of meaning). To do this, we set up a discrimination task in a communication game and manipulated whether the feature dimension shape was relevant or not in discriminating between two referents. The experimental languages gradually evolved to encode information relevant to the task of achieving communicative success, given the situational context in which they are learned and used, resulting in the emergence of different linguistic systems. These results suggest language systems adapt to their contextual niche over iterated learning.

Background

Context clearly plays an important role in how we learn and use language. Without this contextual scaffolding, and our inferential capacities, the use of language in everyday interactions would appear highly ambiguous. And even though ambiguous language can and does cause problems (as hilariously highlighted by the ‘What’s a chicken?’ case), it is also considered to be communicatively functional (see Piantadosi et al., 2012). In short: context helps in reducing uncertainty about the intended meaning.

If context is used as a resource in reducing uncertainty, then it might also alter our conception of how an optimal communication system should be structured (e.g., Zipf, 1949). With this in mind, we wanted to investigate the following questions: (i) To what extent does the context influence the encoding of features in the linguistic system? (ii) How does the effect of context work its way into the structure of language? To get at these questions we narrowed our focus to look at the situational context: the immediate communicative environment in which an utterance is situated and how it influences the distinctions a speaker needs to convey.

Of particular relevance here is Silvey, Kirby & Smith (2014): they show that the incorporation of a situational context can change the extent to which an evolving language encodes certain features of referents. Using a pseudo-communicative task, where participants needed to discriminate between a target and a distractor meaning, the authors were able to manipulate which meaning dimensions (shape, colour, and motion) were relevant and irrelevant in conveying the intended meaning. Over successive generations of participants, the languages converged on underspecified systems that encoded the feature dimension which was relevant for discriminating between meanings.

The current work builds on these findings in two ways: (a) we added a communication element to the setup, and (b) we further explored the types of situational context we could manipulate. Our general hypothesis, then, is that these artificial languages should adapt to the situational context in predictable ways based on whether or not a distinction is relevant in communication.

Method

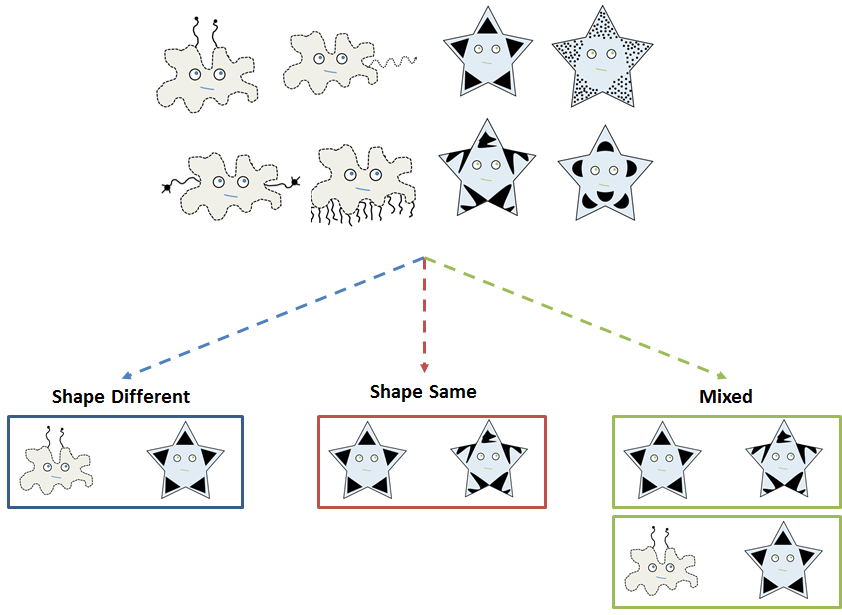

Using an artificial language paradigm, we experimentally simulate cultural transmission in a pair-based communication game setup. This involves training participants on a randomly generated artificial language which provides labels for a set of pictures. (Side note: the initial languages were randomly generated strings made up of CV syllables. This meant that participants in the initial generation would see three unique strings for each meaning.) There were a total of eight pictures that varied in shape (blobs or stars), with each referent also having a unique, idiosyncratic element.

After learning the language, participants play a series of communication games with their partner, taking turns to describe pictures for each other. We modified the situational context in which communication took place by manipulating whether the feature dimension of shape was relevant or not for the discrimination task. This gives us three conditions:

In the Shape Different condition, the situational context is constructed so that pairings of target and distractor always differ in shape. Conversely, in the Shape Same condition, target and distractor are always of the same shape — differing only on their idiosyncratic features. Lastly, for the Mixed condition we manipulated the predictability of situational contexts across trials: on some trials target and distractor share the same shape and on others they differ in shape.
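To make the three conditions concrete, here is a minimal sketch of how the target/distractor pairings could be enumerated. It is an illustration rather than the code used in the experiment: the `(shape, id)` encoding of referents, the even four-blobs/four-stars split, the condition names, and the uniform sampling of trials are all assumptions of mine.

```python
import itertools
import random

# Hypothetical encoding: each referent is (shape, unique_id).
# The even split of four blobs and four stars is an assumption.
SHAPES = ("blob", "star")
REFERENTS = [(SHAPES[i // 4], i) for i in range(8)]


def candidate_contexts(condition):
    """Return every (target, distractor) pairing allowed in a condition."""
    pairs = list(itertools.permutations(REFERENTS, 2))
    if condition == "shape_different":
        # Shape alone is enough to pick out the target.
        return [(t, d) for t, d in pairs if t[0] != d[0]]
    if condition == "shape_same":
        # Only the idiosyncratic element distinguishes the target.
        return [(t, d) for t, d in pairs if t[0] == d[0]]
    if condition == "mixed":
        # Both kinds of context can occur across trials.
        return pairs
    raise ValueError(f"unknown condition: {condition}")


def sample_trial(condition, rng=random):
    """Draw one context uniformly at random (the sampling scheme is assumed)."""
    return rng.choice(candidate_contexts(condition))


if __name__ == "__main__":
    for name in ("shape_different", "shape_same", "mixed"):
        print(name, len(candidate_contexts(name)), sample_trial(name))
```

Under these assumptions, the Shape Same condition never gives shape any discriminating work to do, the Shape Different condition always does, and the Mixed condition contains both kinds of pairing.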

Finally, these pairs of participants were arranged into transmission chains, such that the language produced during communication by the nth pair in a chain became the language that the (n+1)th pair attempted to learn. This method allows us to investigate how the artificial languages change and evolve as they are adapted to meet the participants’ communicative needs and/or as they are passed from individual to individual via learning. (N.B. If you’re not familiar with Iterated Learning studies, then you should probably check out a recent paper by Simon Kirby, Tom Griffiths and Kenny Smith (2014): Iterated learning and the evolution of language.)

Why do this study?

We went into this experiment with the idea that these subtle manipulations to context would influence the communicative strategies employed and subsequently impact how these systems were shaped over cultural evolution. Specifically, we argued for the following: (i) holistic languages (lacking systematic structure through only encoding the idiosyncratic features) would emerge where stimuli always shared the same shape and thus needed to be distinguished using the idiosyncratic components; (ii) underspecified languages (where only shape is encoded) would emerge when shape was always relevant in distinguishing between pairs of stimuli; and (iii) systematic languages (consisting of compound signals that encode both shape and the idiosyncratic features) would emerge when pairings of stimuli varied across trials: sometimes shape was relevant and sometimes the idiosyncratic feature was relevant for communicative success (and it was therefore advantageous for the system to encode both features).

You might be tempted to say at this stage that these hypotheses seem straightforward enough. So why bother doing the study? Well, sure, it’s a reasonable set of hypotheses, and builds upon previous research, but there are other competing hypotheses for what could have happened in this experiment. The first of these is the learning bias approach (see Griffiths & Kalish, 2007 for a characteristic example). This makes the prediction that language structure is closely coupled to the prior expectations and biases of language learners. At the other end of the spectrum are accounts of historical contingency (e.g., Dunn et al., 2011). Exponents of this perspective hold that the types of systems that emerge are primarily constrained by random historical events, subtly biasing the language in one direction or another in a lineage-specific fashion. As we said in the paper:

In their extreme incarnations, the learning bias and historical contingency accounts both predict that manipulating the situational context will have little effect on the types of systems that emerge in our experiment. For a learning bias account we would predict considerable convergence across all experimental conditions: there will be a globally-optimal solution in terms of a prior constraint (or set of constraints), with the languages then converging towards this prior. By contrast, the historical contingency account would predict a much higher degree of variation in the types of systems that eventually emerge, with the states of these systems being better predicted by individual variation and lineages than by either contextual or prior cognitive constraints.

Results

Our general set of results supports our initial premise that language structure does adapt to the situational contexts in which it is learned and used. Furthermore, despite evolving very different systems in the three conditions, all of the languages were perfectly adequate for the task of conveying the intended meaning during communication (all conditions pretty much reached 100% communicative success by the final generation).

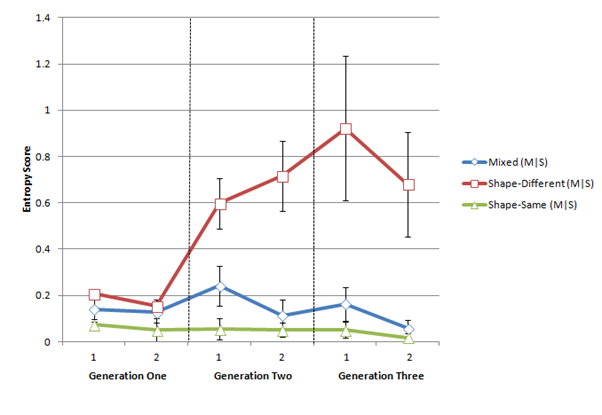

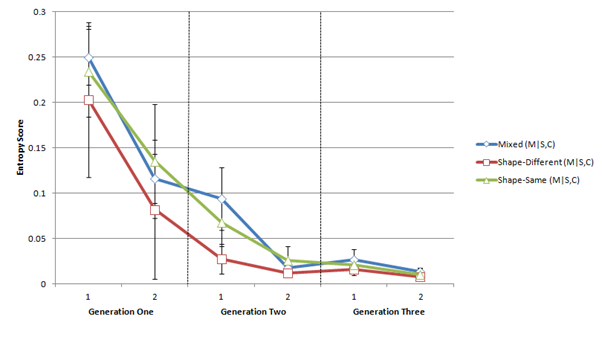

We did a considerable amount of qualitative and quantitative analysis (to find out more you can go and read the paper). But if I had to pick a money shot, then it’d be the conditional entropy:

Without going into too much detail (for a more technical overview, see the paper), we measured the expected entropy (i.e., uncertainty) for meanings given a signal both out of context, H(M|S), and in context, H(M|S,C). In a nutshell, the first graph shows that the underspecified systems which evolve in the Shape Different condition appear to increase in uncertainty over generations, with there being more one-to-many mappings of signals and meanings, whereas the second graph shows that these underspecified systems are communicatively functional (when you consider the context and how it interacts in reducing uncertainty). This supports the contention that ambiguity does not always need to be counter-functional for communication. On the contrary, “when context is informative, any good communication system will leave out information already in the context” (Piantadosi, Tily & Gibson, 2012: 284).
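For readers who want a feel for the two measures, here is a simplified sketch. It is not the exact computation from the paper: I am assuming that every meaning is equally likely, that each meaning has exactly one signal, and that a context is just a target/distractor pair. The function names and the toy signals `wa` and `ki` are hypothetical.

```python
import math
from collections import Counter


def entropy_out_of_context(language):
    """H(M|S) in bits, assuming uniform meanings and one signal per meaning."""
    counts = Counter(language.values())
    total = len(language)
    # A signal shared by n meanings leaves log2(n) bits of uncertainty.
    return sum((n / total) * math.log2(n) for n in counts.values())


def entropy_in_context(language, contexts):
    """H(M|S,C) in bits, averaged over (target, distractor) contexts."""
    total = 0.0
    for target, distractor in contexts:
        signal = language[target]
        # Only referents present in the context compete for the signal.
        consistent = sum(
            1 for m in (target, distractor) if language[m] == signal
        )
        total += math.log2(consistent)
    return total / len(contexts)


# Toy underspecified language: the signal encodes shape only.
meanings = [("blob", i) for i in range(4)] + [("star", i) for i in range(4, 8)]
underspecified = {m: ("wa" if m[0] == "blob" else "ki") for m in meanings}

# Contexts in which target and distractor always differ in shape.
shape_different = [
    (t, d) for t in meanings for d in meanings if t[0] != d[0]
]

if __name__ == "__main__":
    print(entropy_out_of_context(underspecified))
    print(entropy_in_context(underspecified, shape_different))
```

With this toy language, each signal is shared by four meanings, so the out-of-context uncertainty is 2 bits, while in Shape Different contexts the signal always picks out the target and the in-context uncertainty is 0 bits. That is the pattern described above: ambiguous out of context, but fully functional in context.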

Summary

To summarise, I’ll liberally quote from our paper:

As we outlined in the introduction, some meaning is encoded and some meaning is inferred, with interactional short-term strategies of conveying the intended meaning feeding back into long-term, system-wide changes. In our experiment, languages gradually evolved to encode information relevant to the task of achieving communicative success in context, with different language systems evolving in each experimental condition. In the Shape-Same condition, where the dimension of shape was always the same for stimuli pairings, holistic systems of communication emerged, whilst in the Shape-Different condition, where the dimension of shape was always different for stimuli pairings, the system generalised and became underspecified (although unexpectedly variable: see discussion below). For the Mixed condition, which featured both Shape-Same and Shape-Different contexts, the systems that emerged were systematically structured: that is, both shape category and individual identity were encoded in the linguistic signal. These divergent systems arise given a very simple meaning space, through slight manipulations to the situational context.

So, if languages are adapting to their contextual niche, then what are the implications for the learning bias and historical contingency accounts?

Even though our results are broadly consistent with the ecologically sensitive account, there is also evidence consistent with the learning bias (e.g., pockets of systematicity in the Shape-Same condition and the overall reduction of synonymy across all conditions) and historical contingency (e.g., the emergence of a holistic language in chain 11 of the Shape-Different condition) accounts. It is likely that all these theoretical perspectives hold true to some extent, with the role of context being mediated by partially-competing motivations of prior learning biases and historical contingency. Such notions reflect the converging evidence that languages, and the way in which they are organised, “are better explained as stable engineering solutions satisfying multiple design constraints, reflecting both cultural-historical factors and the constraints of human cognition” (Evans & Levinson, 2009: 429).

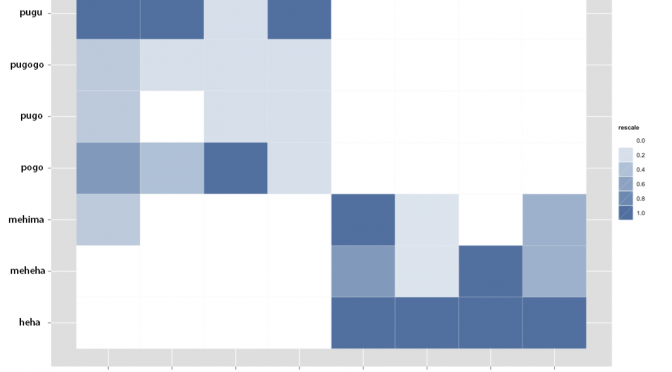

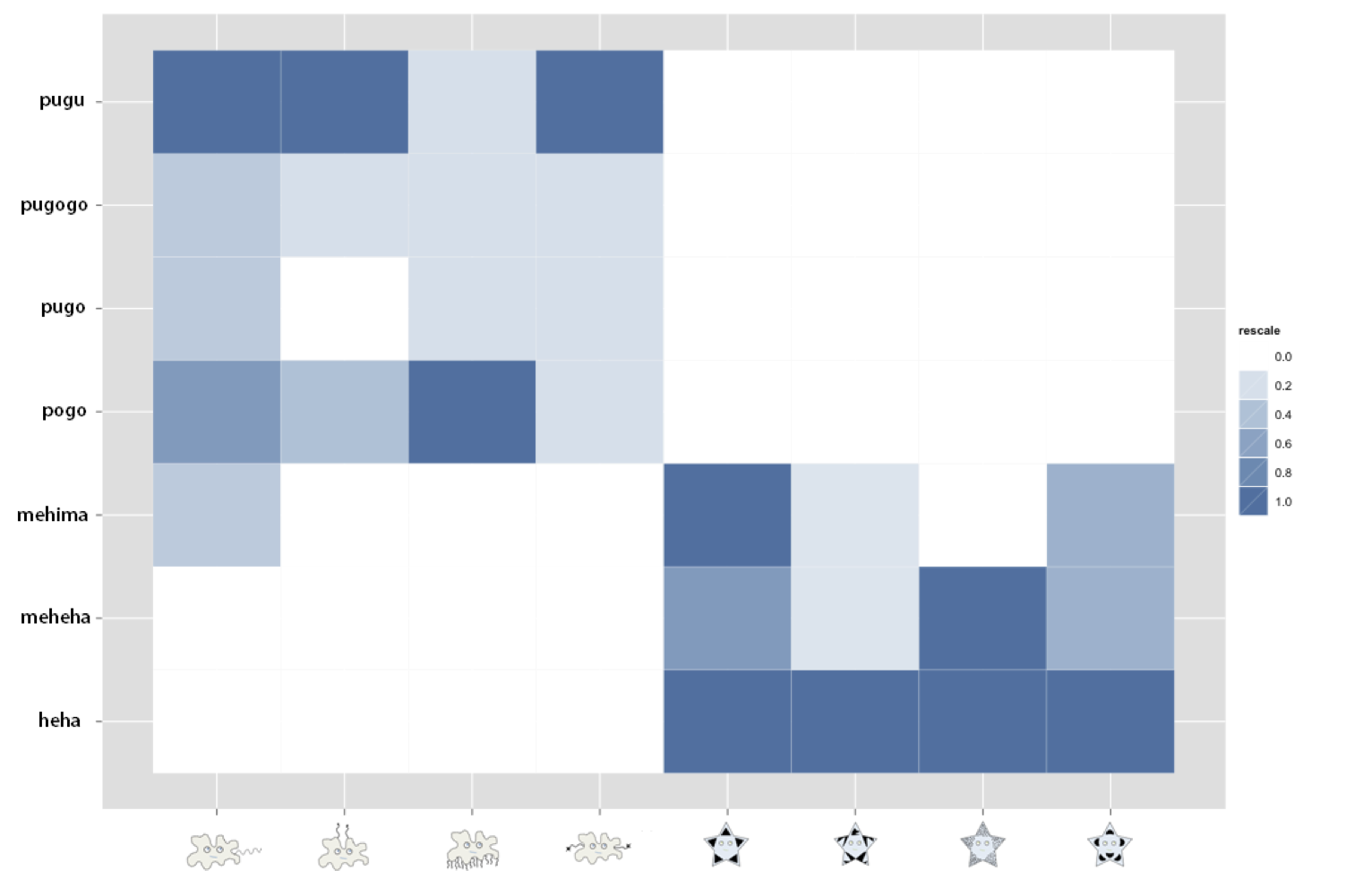

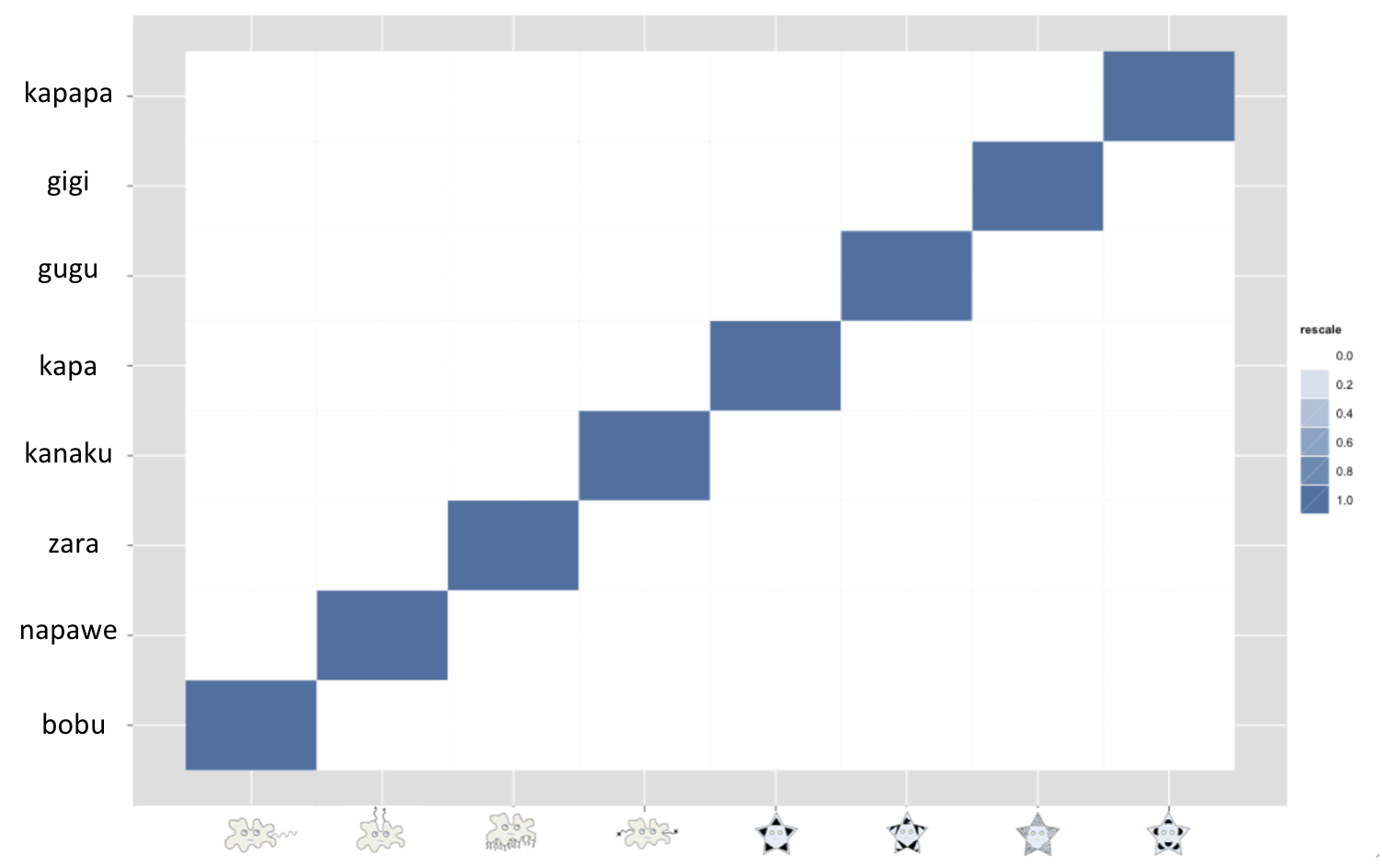

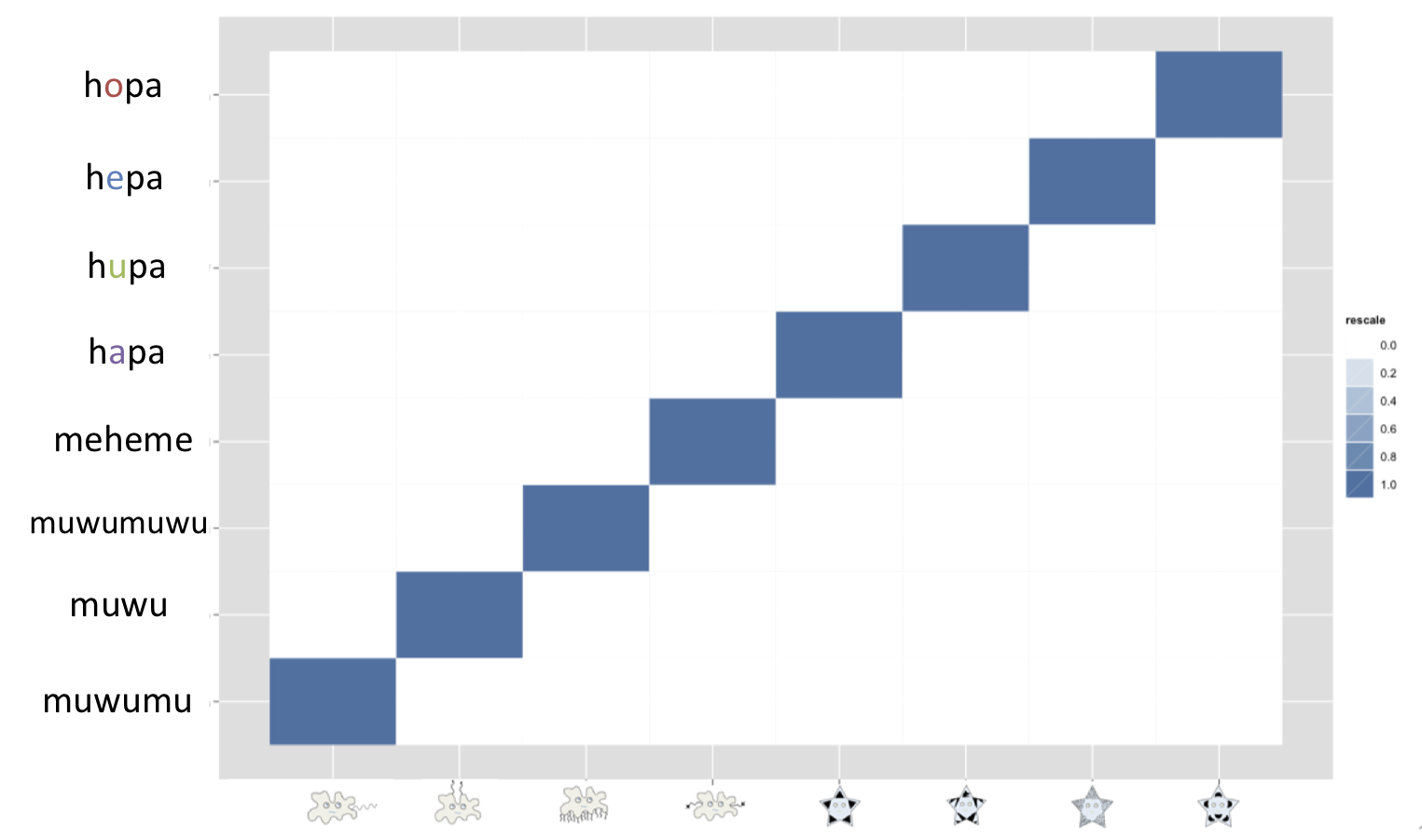

Bonus heatmaps

To show the types of languages that emerged by the end of the experiment I’ve created some bonus heatmaps (which didn’t make it into the final cut of the paper):

References

Winters, J., Kirby, S., & Smith, K. (2014). Languages adapt to their contextual niche. Language and Cognition, 1-35. DOI: 10.1017/langcog.2014.35

Silvey, C., Kirby, S., & Smith, K. (2014). Word meanings evolve to selectively preserve distinctions on salient dimensions. Cognitive Science. PMID: 25066300

Piantadosi, S., Tily, H., & Gibson, E. (2012). The communicative function of ambiguity in language. Cognition, 122 (3), 280-291. DOI: 10.1016/j.cognition.2011.10.004